A few months ago, I started learning how to code because I’ve always been interested in tech and decided that learning a computer programming language would not only make me more employable but also help me solve everyday problems.

What I’ve realized in the short time that I’ve been learning R and Python through my data science course is that coding can automate the monotonous or time-consuming tasks people do daily at work, so they have more time to focus on other things.

You don’t need to be a software engineer to automate your work tasks. In fact, I come from a journalism background and started studying data science because I have an interest in storytelling. Learning how to visualize data was something I became interested in when I was studying data journalism.

So I decided to write an article that can help you automate a couple of tasks, which will hopefully make not only work easier but your everyday life too. If you are interested in learning Python, I would recommend installing Anaconda on your computer so you can use Jupyter Notebook to store your code and your notes.

Automatically Move Files

This doesn’t seem like a hard task to do manually, but if you spend ages going through your downloads folder tidying it up (like I used to) then this is a great task to automate. Before you start telling your computer which folder to send files to, you’ll need to import the ‘os’ and ‘shutil’ modules. These allow Python to interact with your operating system and give you the ability to remove, copy, and move files. If you’re using Jupyter Notebook, put both imports at the top of a cell.
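As a minimal sketch, the two imports described above would look like this in a notebook cell. Both modules ship with Python, so nothing extra needs installing; the `print` line is only my addition to confirm the cell ran.

```python
import os      # lets Python work with folders and file paths on your system
import shutil  # higher-level file operations such as copy and move

# Optional check: show which folder Python is currently working in.
print(os.getcwd())
```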

There are a few different ways to move a file in Python but I’ll just use one as an example. First, you’ll need to tell Python where your file is located, which is called the file path. You’ll need to put the file path in quotation marks, otherwise Python won’t be able to read it.

For example, if you have an Excel spreadsheet located in your downloads folder it would look something like:

‘/Users/Bob/Downloads/filename.xls’

The next part is you’ll need to identify the file path of where you’d like the file to go. For example:

‘/Users/Bob/Desktop/Work/Spreadsheets/filename.xls’

So to actually move it from one place to another it would look something like:
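Here is one way the move could be written, assuming `shutil.move` is the approach being described. The helper name `move_file` is hypothetical, and the step that creates the destination folder is my own addition so the move does not fail when that folder is missing.

```python
import os
import shutil


def move_file(source, destination):
    """Move one file, creating the destination folder first if needed."""
    folder = os.path.dirname(destination)
    if folder:
        os.makedirs(folder, exist_ok=True)
    # shutil.move returns the path the file ended up at.
    return shutil.move(source, destination)


# Example call with the paths used in this article (run on your own machine):
# move_file('/Users/Bob/Downloads/filename.xls',
#           '/Users/Bob/Desktop/Work/Spreadsheets/filename.xls')
```

The example call is commented out because those folders only exist on Bob’s computer; swap in your own paths before running it.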

Run the script and then check if your file has moved! If it has – great! If it hasn’t moved and you’ve received an error, check spelling and file paths to make sure you’ve written the right code to find the file and move it.

If you have multiple files that you want to move, you can write a script that sends every image (.jpeg) or Word document (.docx) to its own folder. To write that script you’d need a ‘for’ loop, which is more complicated. There are plenty of YouTube tutorials to help you do that.
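To give a rough idea of what such a ‘for’ loop might look like, here is a sketch under my own assumptions. The function name `sort_files` and the folder names in the example call are hypothetical; the idea is simply to look at each file’s extension and move it to the folder listed for that extension.

```python
import os
import shutil


def sort_files(source_folder, destinations):
    """Move files out of source_folder based on their extension.

    destinations maps a lowercase extension (such as '.jpeg') to a folder.
    Files with other extensions are left where they are.
    """
    moved = []
    for name in sorted(os.listdir(source_folder)):
        source_path = os.path.join(source_folder, name)
        if not os.path.isfile(source_path):
            continue  # skip sub-folders
        extension = os.path.splitext(name)[1].lower()
        target_folder = destinations.get(extension)
        if target_folder is None:
            continue  # no rule for this file type
        os.makedirs(target_folder, exist_ok=True)
        shutil.move(source_path, os.path.join(target_folder, name))
        moved.append(name)
    return moved


# Example call (hypothetical folders, run on your own machine):
# sort_files('/Users/Bob/Downloads', {
#     '.jpeg': '/Users/Bob/Pictures',
#     '.docx': '/Users/Bob/Documents',
# })
```

The function returns the names of the files it moved, which makes it easy to check what happened after a run.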

Scrape Data From Websites

If you’re not familiar with web scraping, it’s the process of downloading data from websites and extracting information from them. Web scraping is an invaluable skill that is becoming more in demand, so by learning it you could save yourself hours of work and it looks good on your resume.

Let’s say you work for an e-commerce company and you want to see the data on your competitor’s website. You’ll need to find a way to download it in a usable format. Copying and pasting is fine for websites that only have a few pages, but if you’re searching a large marketplace, for example, there will be hundreds of pages.

Web scraping can be used in a number of industries to speed things up including journalism, academia, finance, marketing, SEO, and business, just to name a few. By looking at data from websites you can power your understanding of content and use it to do competitor analysis, data-driven research, and make better-informed decisions.

Apart from using it in the workplace, data scraping is useful when you’re looking for a job. You can scrape the data from job websites so you get alerted when certain jobs become available. Of course, you can check them every day but that seems quite cumbersome, and also job alert emails flood too many inboxes.

There are a few different libraries to help you scrape the web, such as:

- Beautiful Soup

- Scrapy

- Selenium

- Requests

Selenium can help you automate many things in a web browser, including controlling your mouse and automatically logging into websites. For this example, I’m just going to use Beautiful Soup, but as you become more comfortable scraping websites and want to learn new things, play around with different libraries.

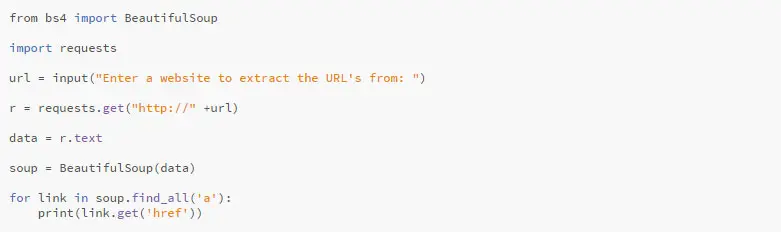

So for this exercise, I’m going to scrape all the URLs from a website. Remember to open a new Jupyter Notebook so you can put in your Python script.

Once you’ve got a new notebook open, you’ll need to get Beautiful Soup in there. To make things easier, I’ve written the script out below:

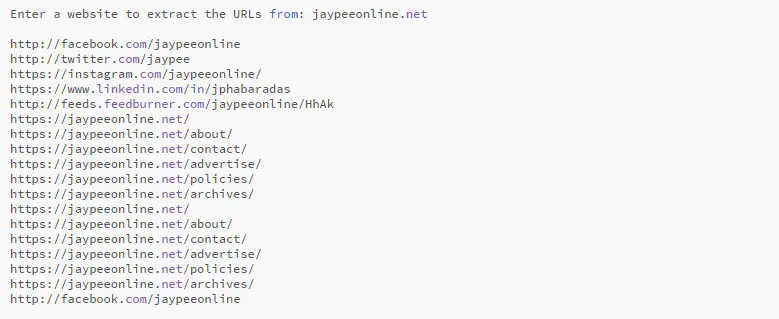
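In case the image above doesn’t load, here is a sketch of what a link-scraping script along these lines might look like. It is my own reconstruction, not necessarily the exact script in the image: it assumes Beautiful Soup is installed as the `bs4` package, it fetches the page with Python’s built-in `urllib` rather than a separate library, and the function names `extract_links`, `scrape_links`, and `main` are hypothetical.

```python
from urllib.request import urlopen

from bs4 import BeautifulSoup


def extract_links(html):
    """Return the href value of every <a> tag that has one."""
    soup = BeautifulSoup(html, "html.parser")
    return [a["href"] for a in soup.find_all("a", href=True)]


def scrape_links(site):
    """Download the page at http://<site> and return the links on it."""
    # The http:// prefix is added here, so type the site without it.
    with urlopen("http://" + site, timeout=10) as response:
        return extract_links(response.read())


def main():
    """Ask for a website, then print every link found on its front page."""
    site = input("Enter a website to extract the URLs from: ")
    for link in scrape_links(site):
        print(link)


# In a notebook cell, call main() to be prompted for a website.
```

Keeping the parsing in `extract_links` separate from the download means you can try it on any HTML string without going online.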

Now, when you run this cell, you should see a prompt asking you for a website.

Enter the website that you want to scrape. For this example, I’ll use the current website you’re on, Jaypee Online. Remember not to include the http:// as this has already been done for you. The script returned a big list of URLs for this website.

In closing, there are countless ways that you can use Python to make your life easier, and not just for work! There are plenty of tutorials online that show you how to use Python to automate playlists on Spotify or automatically upload YouTube video links to Reddit. Remember that you don’t need to be a computer expert to code; you just need to be curious and use Python to solve a real-life problem.

This is a guest contribution by Annie-Mei Forster who works for an innovative tech start-up called Data Ventures in Melbourne. She’s also a freelance journalist and is currently studying a Graduate Diploma in Data Science. You can find her on LinkedIn or follow her on Twitter.
